I have been using AI almost every day for the better part of two years. I build things with it. I think alongside it. I have written at length about what it can and cannot do. So when I say that putting generative AI in front of primary school children gives me pause, I want to be clear about where that is coming from.

It is not coming from fear.

It is coming from someone who knows exactly what this technology does to the way you think, and who read the Straits Times piece on this very carefully before forming an opinion about it.

Today, I am taking a break from looking back at my career so far to address this.

What the Article Actually Says

The ST report published on 20 April 2026 is more nuanced than the headline suggests.

I think it is worth being honest about that before I push back on any of it.

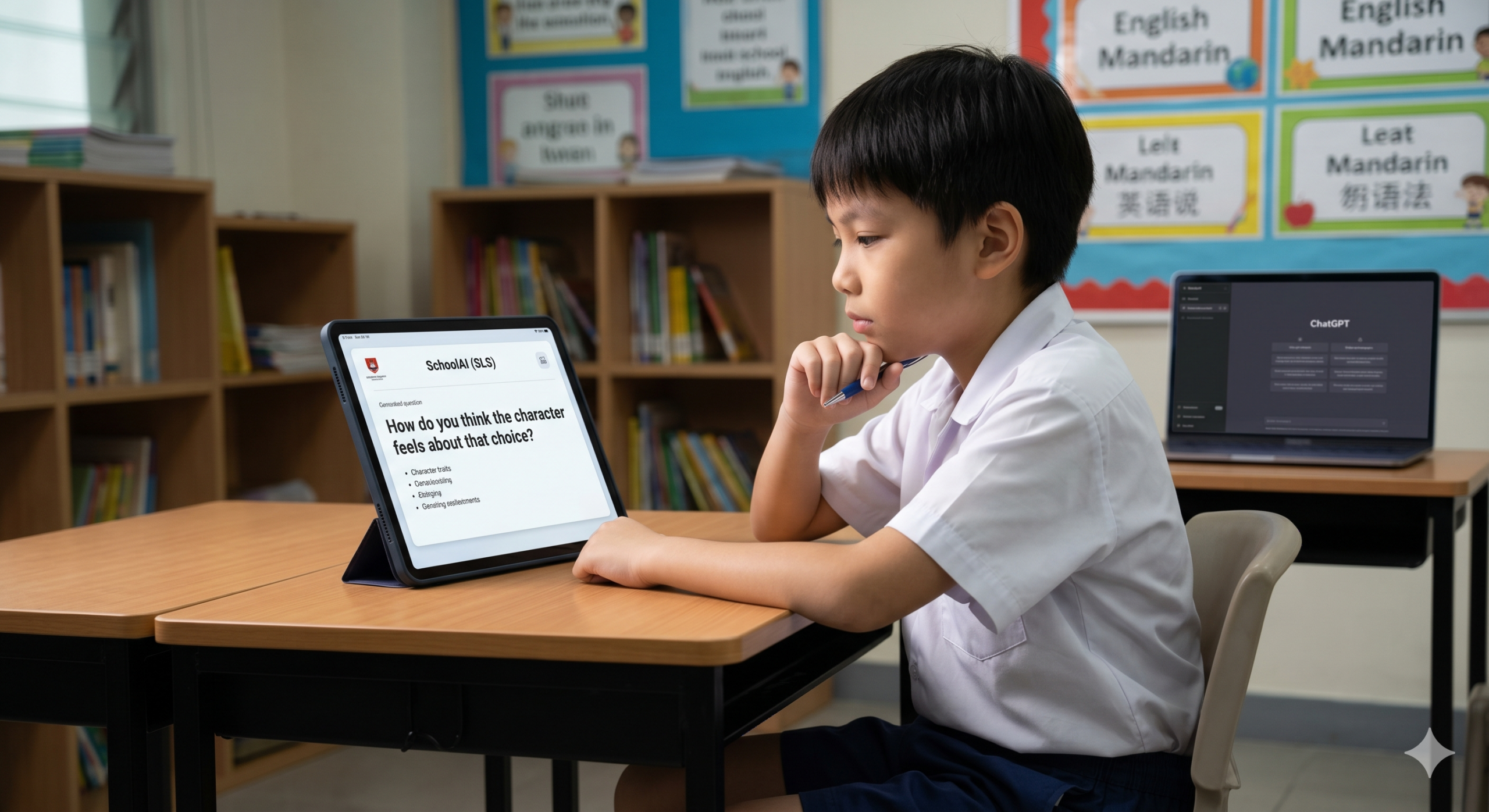

The AI tools being used in MOE primary schools are not ChatGPT. They are purpose-built. Accessed through the Singapore Student Learning Space. Whitelisted by MOE. Used only under teacher supervision.

The English teacher quoted in the piece describes her tool, SchoolAI, in terms that are genuinely encouraging.

It does not give pupils answers. It asks them questions. It uses what she calls Socratic questioning to prompt deeper thinking about character dilemmas and plot development before children put pen to paper.

She says: “It’s about getting into the habit of thinking. The AI won’t write the story for them.”

So far, so good.

That is quite a defensible use of the technology.

So what is my concern, then?

And here’s where Greta Thunberg pops in with a loud “How Dare You?! Are you disagreeing with the experts at Singapore’s Ministry of Education (MOE)?”

Hold up, Greta, because my concern is not with the Socratic model.

My concern is what sits underneath it, and what we might be quietly taking away while we are busy adding something new.

The Research Problem Nobody Is Talking About

Let me say something that is going to date me considerably.

I grew up wrestling with the Dewey Decimal System.

If you needed to know something, you went to the library. You navigated a card catalogue or a classification system that made no immediate sense and rewarded patience. You pulled three books off the shelf, found that one of them was not quite right, followed a footnote in another, and ended up somewhere you did not expect, which, admittedly, was often more useful than where you intended to go.

Nobody is asking for those days back.

I am certainly not.

Oh, hell, no!

But something happened in that process that I do not think we talk about enough.

You learned to search.

You learned to evaluate.

You learned that not every source is equal, that the answer to your question is rarely on the first page you open, and that the act of looking, the navigation, the dead ends, the unexpected detour, is part of how knowledge actually settles into your head.

That is not nostalgia.

That is cognitive science.

The struggle to find information is part of how we learn to think about information. When you have had to work to locate something, you are more likely to interrogate it when you get there.

You have skin in the game.

You have earned the right to an opinion about it.

AI removes that friction almost entirely.

Ask it anything.

It knows.

It answers.

Fluently, confidently, and in the kind of complete, well-structured prose that a primary school teacher would give full marks for.

The problem is not that the answer is wrong, though sometimes it is.

The problem is that the child did not have to think their way to it.

What Gets Lost in the Shortcut

Critical thinking is not a skill you can be taught in a lesson about critical thinking.

It develops through practice.

Specifically, through the repeated experience of encountering information, questioning it, looking for corroboration, finding contradictions, and arriving slowly and imperfectly at a position you can defend.

That process requires friction.

It requires the slight discomfort of not knowing.

The patience to sit with a question before reaching for an answer.

The discipline of checking a second source, and then a third, not because a teacher told you to, but because you have learned, from experience, that the first source is not always sufficient.

These are habits. And like all habits, they are formed early or they are formed with great difficulty later, if they are formed at all.

A ten-year-old who learns that AI can answer any question, in any subject, at any time, is a ten-year-old who is forming a different habit entirely.

Not a bad one, necessarily. But a different one.

And I am not sure we have been honest enough about the trade.

The ChatGPT Homework Incident

The ST article contains a detail that should not be buried.

A Primary 5 pupil was assigned homework by his mother tongue teacher requiring the use of ChatGPT at home. To generate ideas for a composition. To clean up grammatical errors.

The child had never used it before. The parents restrict device access at home (good parents, I say!).

Nobody told them this was coming.

“I am not comfortable with my son using ChatGPT unsupervised,” said the mother. “I feel this is not the age, perhaps when he is a bit older or in secondary school.”

She is absolutely right.

But my concern goes beyond the supervision question.

The task itself, using AI to generate ideas and then clean up the grammar, removes two of the most important parts of writing.

The part where you struggle to find what you want to say.

And the part where you learn, through repeated error, what correct grammar actually looks and feels like.

What remains is the submission.

That is not writing.

That is formatting.

And it doesn’t help anyone, much less a ten-year-old.

What I Would Rather See

I am not calling for AI to be removed from primary schools entirely.

The Socratic questioning model described in the article is worth studying carefully. If the tool genuinely prompts thinking rather than replacing it, that is a meaningful distinction.

But I want the conversation to be honest about what we are asking children to trade.

The ability to sit with a question.

To not know, and to find that tolerable.

To look for answers in places that require navigation and judgment.

To read something, evaluate it, disagree with it, and find something better.

Those are not quaint skills from the card catalogue era.

They are the foundation of every kind of thinking that matters, including the thinking required to use AI well.

A child who has never learned to research will not suddenly develop that habit when they are older and the stakes are higher.

They will reach for the tool that gives them the answer fastest.

Which is exactly what we are teaching them to do.

Mr Ramesh Kumar, the cybersecurity consultant quoted in the article, said schools should inform parents about how AI is used in lessons so they are aware of what their children are exposed to.

That, to me, is the minimum.

What I would add is this: before we decide how to introduce AI to children, we should be very clear about what we are asking them to give up in exchange.

UNESCO recommended a minimum age of 13 for generative AI in classrooms. Singapore is starting at ten.

That gap needs a better justification than the ambition to prepare students for a technology-forward future.

Preparation cuts both ways.

You can prepare a child to use a tool.

Or you can prepare them to think.

Ideally, you do the second one first.